Quality Dashboard

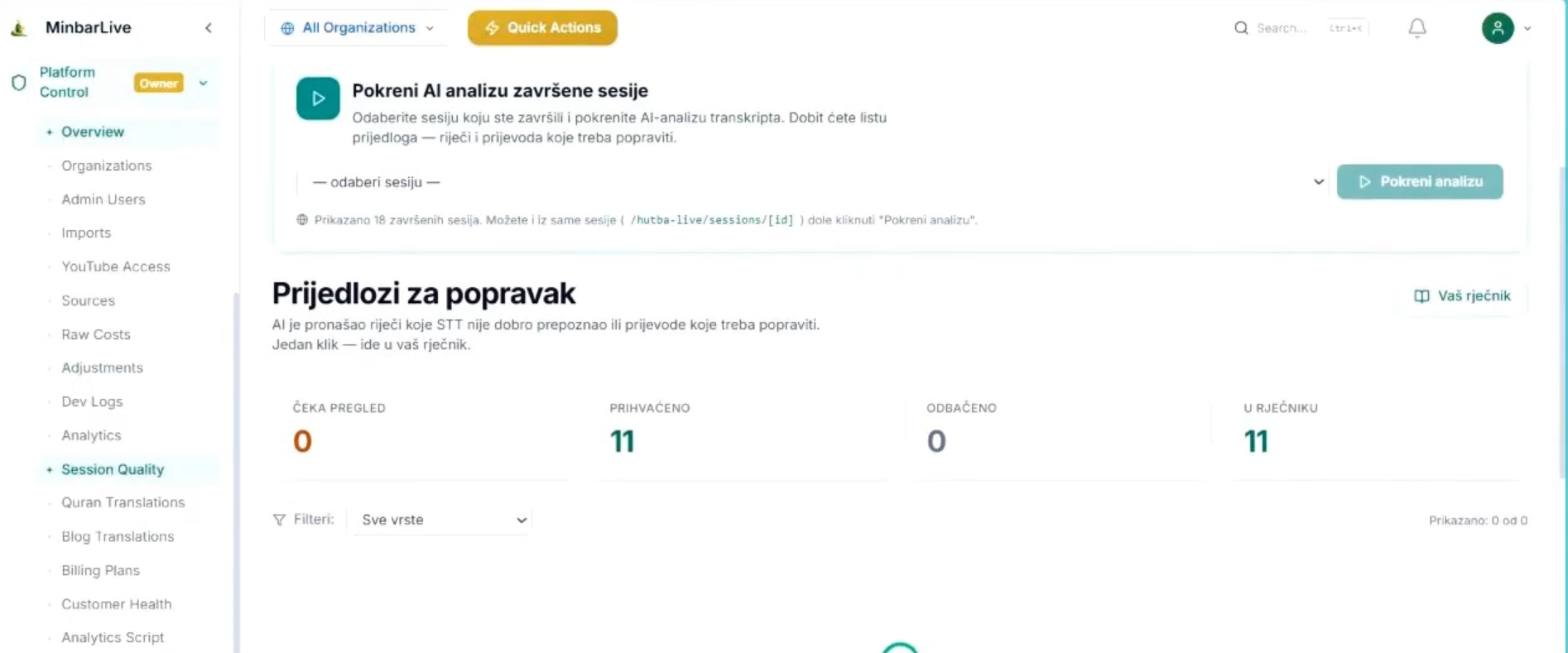

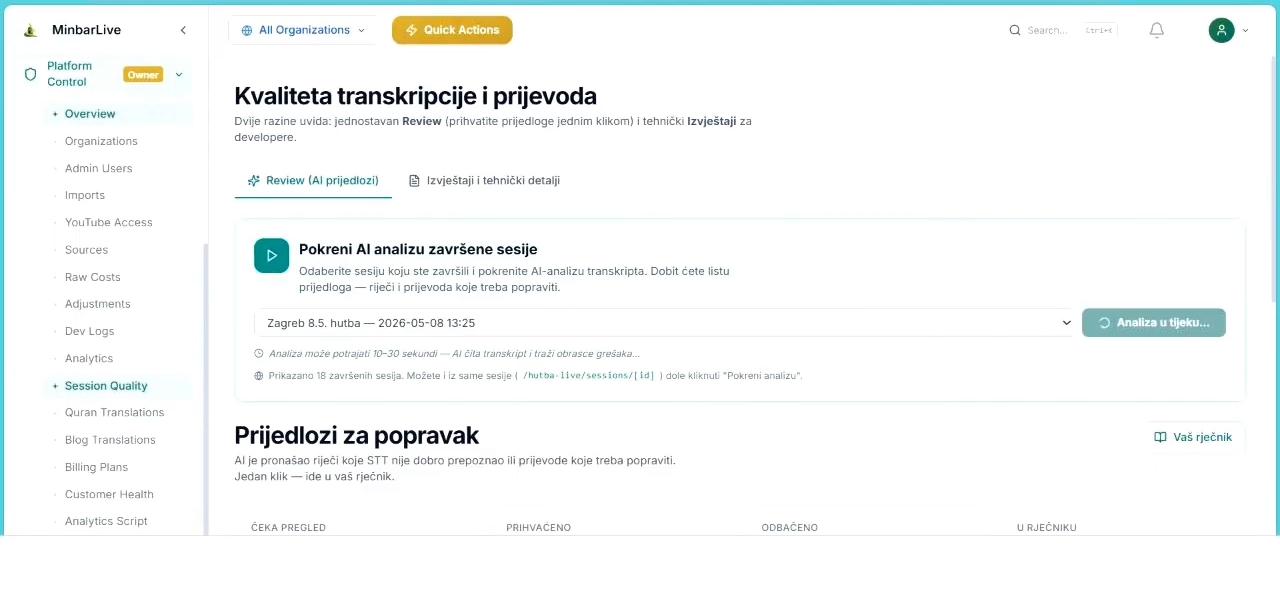

Use Quality Dashboard after a session to have AI review translation and transcription quality for you: run analysis on completed sessions, process suggested fixes, move approved terms into your dictionary, and maintain a measurable improvement loop for future sessions.

Start AI quality analysis for completed sessions without heavy manual work